What is a noindex tag?

A noindex tag is a meta robots tag that tells crawlers that support it not to index a web page.

This tag is usually placed in the head section of a web page. The same rule can also be sent as an X-Robots-Tag in the HTTP response header.

Remember, if you want Googlebot to follow the noindex tag, you must not block this page in the robots.txt file via a disallow directive.

Otherwise, the bot will not be able to crawl the page and see the noindex directive; as a result, the page may still appear in SERPs (for example, when other pages link to it).

Therefore, you must allow the bot to crawl this page so that it can see the noindex tag and follow it.
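As a rough way to spot this conflict, the sketch below uses Python's standard urllib.robotparser to test whether a URL is disallowed for a given bot. The robots.txt content, the example.com URL and the helper name blocked_by_robots are assumptions made for illustration, and Python's parser does not necessarily match Googlebot's own rule matching in every edge case.

```python
from urllib.robotparser import RobotFileParser


def blocked_by_robots(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the robots.txt rules stop user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that disallows the very page we want noindexed.
ROBOTS_TXT = """\
User-agent: *
Disallow: /thank-you/
"""

page = "https://example.com/thank-you/"
if blocked_by_robots(ROBOTS_TXT, page):
    # The crawler cannot fetch the page, so it never reads the noindex tag.
    print(f"{page} is disallowed; remove the disallow rule so noindex can be seen")
```

If the function returns True for a page that carries a noindex tag, the disallow rule is the thing to remove.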

Why is the “noindex” tag important?

The noindex tag is effective for the pages that you do not want to appear in SERPs. Usually, the noindex tag is implemented on the following pages:

Admin or Login pages

Thank you pages

Tag pages

Low-quality blogs or pages

PPC Landing pages

Pages created for staging environments

Duplicate content pages

Author pages

Custom posts or pages

A noindex tag keeps your private and unimportant pages out of the index, but too many noindex pages can have a negative impact on your website.

Google still has to crawl a page to see its noindex tag, so it spends crawl budget on these unnecessary pages rather than on essential ones. It is therefore recommended to avoid having too many pages with a noindex tag.

Moreover, when Google sees a noindex tag, it reduces its crawl frequency gradually for this page.

Google crawls noindex pages less often, ultimately drops them from the index, and stops following the links on them even if you have assigned a follow tag.

How to implement a noindex tag?

Implementing a noindex tag is quite easy. You can choose any method according to the type of content.

You can implement it in two ways if you are not a WordPress user:

<meta> tag

The first method is adding a noindex meta tag in the head section of your web page.

If you want to prevent all the search engine crawlers from indexing a web page, just place the following code in the head section of this page.

<meta name="robots" content="noindex">

Alternatively, if you only want to prevent a particular bot from indexing this page, you can add the code like this:

<meta name="googlebot" content="noindex">

<meta name="msnbot" content="noindex">

The interpretation of the noindex tag may vary from bot to bot, so these pages may still be indexed and appear in the search results of other search engines.
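To check which of these tags a page actually carries, a small parser can help. The following is a minimal sketch built on Python's standard html.parser; the class name RobotsMetaParser and the function is_noindexed are hypothetical. It only reports whether a noindex token is present for the generic robots name or for one named bot, which is a simplification of how real crawlers resolve directives.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the content tokens of <meta> tags, keyed by lowercased name."""

    def __init__(self):
        super().__init__()
        self.directives = {}  # e.g. {"robots": {"noindex", "follow"}}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        name = attr.get("name", "").lower()
        content = attr.get("content", "")
        if name and content:
            tokens = {t.strip().lower() for t in content.split(",") if t.strip()}
            self.directives.setdefault(name, set()).update(tokens)


def is_noindexed(html: str, bot: str = "googlebot") -> bool:
    """True if a generic robots tag or the bot-specific tag contains noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    generic = parser.directives.get("robots", set())
    specific = parser.directives.get(bot.lower(), set())
    return "noindex" in generic or "noindex" in specific


# A tag aimed only at msnbot does not apply to googlebot.
print(is_noindexed('<meta name="msnbot" content="noindex">', bot="googlebot"))  # False
```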

Remember, Google does not support a noindex rule in the robots.txt file.

HTTP response header

The second method sends X-Robots-Tag as an HTTP response header.

This method is typically used for non-HTML files such as PDFs, documents, images and videos. It looks like this:

HTTP/1.1 200 OK

(...)

X-Robots-Tag: noindex

(...)

Implementing noindex in WordPress

Adding noindex to pages in WordPress is relatively easy. You can assign a noindex tag to any page using the Yoast SEO plugin.

At the bottom of the page editor, go to the Yoast SEO section and click Advanced.

Now change the “Allow search engines to show this post in search results” setting from Yes to No.

Things to keep in mind while using the noindex tag

If you want to assign a noindex tag to your pages, here are a few best practices to help you implement this tag effectively.

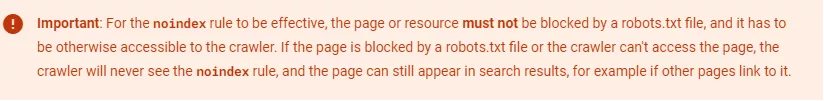

Don’t use the noindex tag with the disallow directive in robots.txt

The purpose of the noindex tag is to prevent specific pages from appearing in search results.

However, the pages can still appear in SERPs if they are also blocked by a disallow directive in the robots.txt file.

Google will never see the noindex rule because it is not allowed to crawl those pages. As a result, they may appear in search results without being crawled if they get backlinks from other sources.

As Google said:

Long-term noindex means nofollow

If a page keeps a noindex tag for a long time, its internal links are eventually treated as nofollow, because Google starts ignoring them.

So, a long-term noindex means that any important link on that page will be ignored by Google despite the follow directive, i.e.

<meta name="robots" content="noindex, follow" />

Noindex tag vs canonical tag

If there are duplicate pages on your website, you can tell Google which page version is preferred by assigning a canonical tag. This tells Google that this page is canonical out of many duplicate pages.

Instead of using a noindex tag on duplicate pages, it is better to assign a canonical tag, as Google says it prefers a canonical tag over a noindex tag.

This tells search engines to index this preferred or canonical version and consolidates all the link signals towards that page.

<meta> tag vs X-Robots-Tag

There are two ways to implement noindex: the <meta> tag or the X-Robots-Tag header.

Both work, and webmasters can use whichever is more convenient. However, the noindex <meta> tag is much easier to implement than X-Robots-Tag, especially if your website is on WordPress.
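For files that have no head section, such as PDFs, the header is the only option. The sketch below shows one possible way to decide, on the server side, which responses should carry the header. The function name robots_headers and the list of extensions are assumptions for illustration, and wiring the result into a real server or framework is left out.

```python
from pathlib import PurePosixPath

# Assumed set of file types to keep out of the index; adjust to your site.
NOINDEX_EXTENSIONS = {".pdf", ".doc", ".docx"}


def robots_headers(url_path: str) -> dict:
    """Return the extra response headers to send for the given URL path."""
    suffix = PurePosixPath(url_path).suffix.lower()
    if suffix in NOINDEX_EXTENSIONS:
        return {"X-Robots-Tag": "noindex"}
    return {}


print(robots_headers("/files/report.pdf"))  # {'X-Robots-Tag': 'noindex'}
```

HTML pages fall through with no extra header here, on the assumption that they are handled with the <meta> tag instead.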

FAQs

Does noindex reduce crawl frequency?

Crawl frequency refers to how often Google crawls a website or its pages. Implementing a noindex tag for specific pages on your website will reduce the crawl frequency for these pages.

Google will return to see if you have removed the noindex tag or fixed the error preventing the page from being indexed. If the tag still exists, Google will recrawl the page a few times. Eventually, it will lengthen the interval between crawls of this page.

What is noindex nofollow?

Sometimes, the noindex tag is used with a nofollow tag like this:

<meta name="robots" content="noindex, nofollow">

This is done to stop the bots from following the links on this page and passing the link equity. However, adding nofollow does not guarantee that these links will not be crawled and indexed.

Can I use noindex with disallow?

If you want to prevent pages from appearing in search results, ensure these pages are not blocked with a disallow directive in the robots.txt file.

Otherwise, Google will not be able to see the noindex tag, and the pages may still appear in SERPs. In short, your noindex tag will be of no use.