Key Takeaways

OpenAI introduces a new web crawler named GPTBot for managing site crawling.

Its purpose is to collect data to improve AI models.

Users can control GPTBot's access using robots.txt.

OpenAI has revealed details about its web crawler named GPTBot. GPTBot goes from website to website and collects data from the pages it visits.

The purpose of GPTBot is to gather information from across the internet. OpenAI will use this data to improve their artificial intelligence models.

AI models need lots of data to learn from when they are created and updated.

Website owners can control whether GPTBot is allowed to visit and collect data from their site. They do this by editing "robots.txt".

OpenAI has provided comprehensive documentation for GPTBot, which can be accessed on its official website.

User-agent token: GPTBot

Full user-agent string: Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)If a website owner wants to block GPTBot from their entire site completely, they can add some code to the robots.txt file telling GPTBot is not allowed anywhere on that site.

User-agent: GPTBot

Disallow: /If you only want GPTBot to avoid certain sections or pages, you can specify those sections in the file as being disallowed for GPTBot.

User-agent: GPTBot

Allow: /category-1/

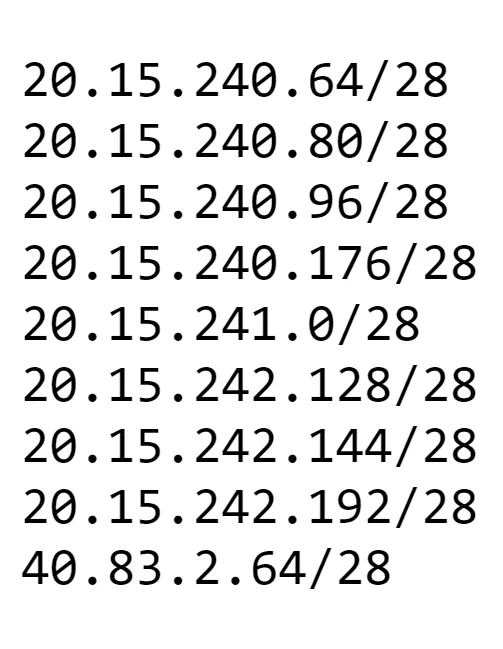

Disallow: /category-2/Right now, GPTBot operates and visits websites within a certain range of Internet Protocol (IP) addresses - 40.83.2.64/28.

The IP range GPTBot uses could change over time, so website owners need to keep checking for updates on the new IPs GPTBot uses.

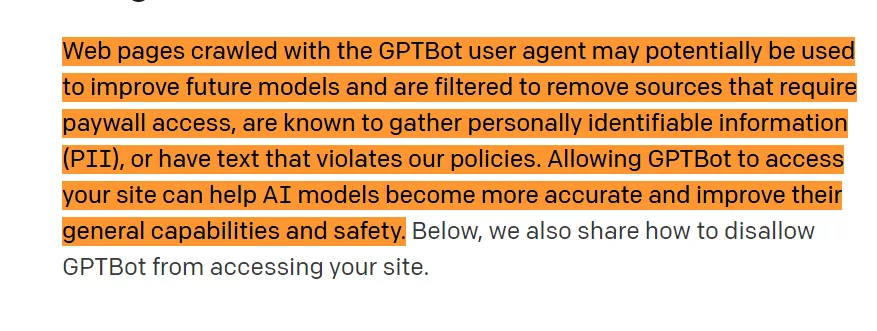

OpenAI has disclosed that GPTBot's primary purpose is to enhance future AI models by aggregating data from pages it crawls.

OpenAI has published detailed instructions and documentation explaining exactly how GPTBot works and how website owners can manage and control GPTBot's access if they want to limit its crawling.

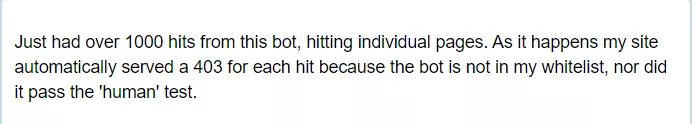

However, some websites have already had issues with GPTBot excessively crawling and visiting too many of their individual pages. These sites had to take steps to block GPTBot's access because it was doing too much crawling.

Google and other major companies are now working on creating new protocols and technology standards as alternatives to robots.txt.

These new methods would be designed specifically for controlling access to AI-powered web crawlers and search engines. The current robots.txt protocol may not work as well for the latest AI systems.